The estimated number of physical qubits needed to run Shor's algorithm is falling, thanks to advances in high-rate quantum low-density parity-check (qLDPC) codes, which offer encoding rates of approximately 30%, compared with the roughly 4% seen in smaller surface codes. By combining these error-correction codes with optimized circuit designs and neutral-atom processors, researchers estimate that the threshold for breaking modern encryption has dropped from millions of physical qubits to as few as 10,000. The research, authored by Lewis R. B. Picard, Manuel Endres, and Dolev Bluvstein, shifts the timeline for the "Quantum Doomsday," suggesting that cryptographically relevant computations are closer than previously estimated.

The Cryptography Threshold and the Million-Qubit Myth

RSA-2048 encryption has long served as the gold standard for securing global digital communications, relying on the mathematical difficulty of factoring large integers. For decades, the consensus within the scientific community was that a quantum computer would require millions of physical qubits to successfully run Shor's algorithm at this scale. This "million-qubit" milestone acted as a security safe harbor, leading many to believe that the threat to cryptography remained decades away.
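To make the link between factoring and RSA concrete, here is a minimal Python sketch using a deliberately tiny key. The primes 61 and 53, the public exponent 17, and all function names are illustrative assumptions rather than anything from the study. Trial division stands in for Shor's algorithm here; it only works because the modulus is tiny.

```python
from math import gcd


def toy_rsa_keys(p: int, q: int, e: int = 17):
    """Build a toy RSA key pair from two small primes (illustration only)."""
    n = p * q
    phi = (p - 1) * (q - 1)
    if gcd(e, phi) != 1:
        raise ValueError("e must be coprime to (p - 1) * (q - 1)")
    d = pow(e, -1, phi)  # modular inverse, available in Python 3.8+
    return (n, e), d


def factor_by_trial_division(n: int):
    """Brute-force factoring; hopeless for a 2048-bit modulus."""
    f = 2
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 1
    raise ValueError("n has no nontrivial factor")


def recover_private_exponent(n: int, e: int) -> int:
    """Once n is factored, the private exponent follows immediately."""
    p, q = factor_by_trial_division(n)
    return pow(e, -1, (p - 1) * (q - 1))


if __name__ == "__main__":
    (n, e), d = toy_rsa_keys(61, 53)
    ciphertext = pow(65, e, n)               # encrypt the message 65
    stolen_d = recover_private_exponent(n, e)
    print(pow(ciphertext, stolen_d, n))      # attacker decrypts: 65
```

The only hard step for an attacker is the factoring itself, which is why a machine that can run Shor's algorithm at the 2048-bit scale would undermine the whole scheme.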

The historical reliance on this high qubit count was primarily due to the massive overhead required for quantum error correction. Traditional surface codes, while robust, are notoriously inefficient, requiring thousands of physical qubits to represent a single stable logical qubit. However, the study led by Manuel Endres and his colleagues demonstrates that this overhead can be reduced by one to two orders of magnitude through the use of reconfigurable hardware and high-rate codes, effectively shattering the million-qubit assumption.
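The scale of that overhead can be sketched with a back-of-the-envelope calculation. The snippet below assumes the standard rotated surface code layout, in which a distance-d patch uses d² data qubits plus d² - 1 measurement qubits to hold one logical qubit. The function name and the distances chosen are illustrative, not taken from the study.

```python
def rotated_surface_code_qubits(distance: int) -> dict:
    """Physical qubits in one rotated surface code patch (one logical qubit)."""
    if distance < 1 or distance % 2 == 0:
        raise ValueError("distance should be a positive odd integer")
    data = distance ** 2         # qubits that carry the encoded state
    ancilla = distance ** 2 - 1  # qubits used to measure stabilizers
    return {"data": data, "ancilla": ancilla, "total": data + ancilla}


if __name__ == "__main__":
    for d in (5, 15, 25):
        counts = rotated_surface_code_qubits(d)
        print(f"distance {d}: {counts['total']} physical qubits per logical qubit")
```

Under this assumption, a distance-5 patch holds one logical qubit in 25 data qubits, which would give a 4% rate if only data qubits are counted, and a distance-25 patch already costs more than a thousand physical qubits per logical qubit.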

What makes neutral-atom processors better for error correction in quantum computing?

Neutral-atom processors are well suited to error correction because they use reconfigurable atomic qubits that support short-range connectivity and low-weight stabilizers. Their hardware-realistic error models, which include heralded atom loss and biased Pauli noise, can be exploited to reduce effective error rates by a factor of two. This flexibility allows for high-rate qLDPC codes that encode over 1,000 logical qubits with significantly fewer physical resources.

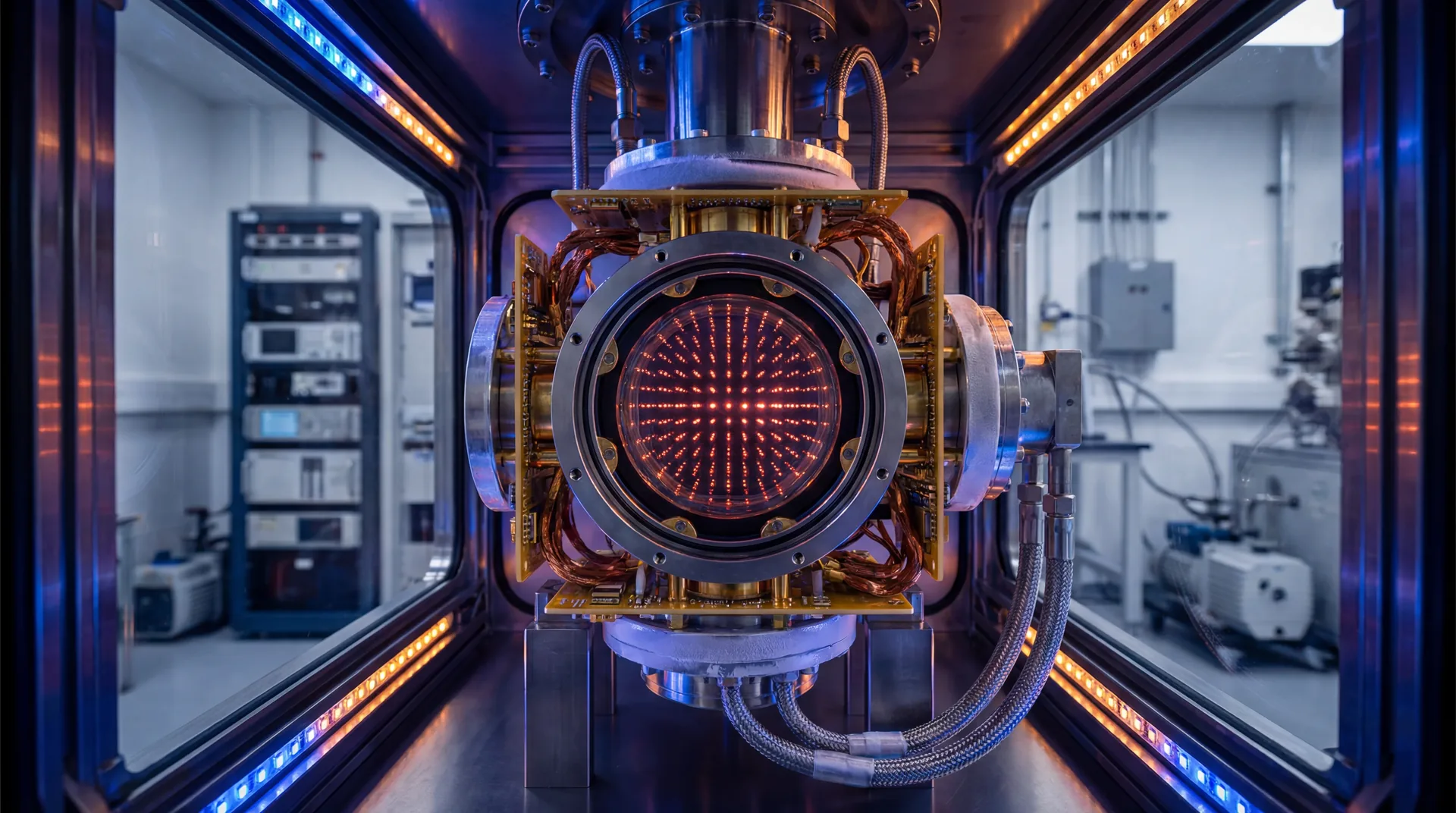

These processors leverage the unique ability to physically move atoms during a computation, a feature known as reconfigurability. According to the research, this allows for non-local connectivity without the need for complex, static wiring. The authors point out that neutral-atom experiments have already demonstrated universal fault-tolerant operations and the ability to trap arrays containing more than 6,000 highly coherent qubits. This architecture is uniquely suited for the high-rate codes necessary to execute Shor's algorithm with minimal physical hardware.

Why is 10,000 the new 'magic number' for Shor’s algorithm?

The number 10,000 has emerged as the new benchmark because it is roughly the smallest physical qubit count at which the study finds Shor's algorithm can be executed with high-rate error-correcting codes. By leveraging efficient logical instruction sets and residue number system arithmetic, the authors conclude that 10,000 reconfigurable atomic qubits are sufficient to challenge RSA-2048 levels of security. This reduction is enabled by the high encoding efficiency of qLDPC codes, which get more logical qubits out of each physical atom.
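For readers unfamiliar with residue number system arithmetic, the classical idea can be sketched in a few lines of Python. A large integer is stored as its remainders modulo several pairwise-coprime moduli, arithmetic is done independently on each small remainder, and the result is rebuilt with the Chinese remainder theorem. The moduli and function names below are hypothetical choices for illustration; the article does not describe how the study applies the technique inside quantum circuits.

```python
from math import prod

# Pairwise-coprime moduli chosen for illustration (not from the study).
MODULI = (251, 253, 255, 256)


def to_rns(x: int, moduli=MODULI) -> tuple:
    """Represent x by its residue modulo each modulus."""
    return tuple(x % m for m in moduli)


def rns_mul(a: tuple, b: tuple, moduli=MODULI) -> tuple:
    """Multiply component-wise; each channel only handles small numbers."""
    return tuple((x * y) % m for x, y, m in zip(a, b, moduli))


def from_rns(residues: tuple, moduli=MODULI) -> int:
    """Rebuild the integer with the Chinese remainder theorem."""
    big_m = prod(moduli)
    total = 0
    for r, m in zip(residues, moduli):
        partial = big_m // m
        total += r * partial * pow(partial, -1, m)
    return total % big_m


if __name__ == "__main__":
    a, b = 12345, 67890
    product = from_rns(rns_mul(to_rns(a), to_rns(b)))
    print(product == a * b)  # True, since a * b is below the product of the moduli
```

The appeal is that one wide multiplication becomes several narrow, independent ones, which is the kind of structure that can shrink the working registers an arithmetic circuit needs.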

The researchers utilized a highly optimized circuit design to reach this 10,000-qubit threshold. Key findings in the study include:

- Encoding Rates: qLDPC codes achieve up to 30% efficiency, drastically reducing physical overhead.

- Logical Qubits: The architecture supports the creation of over 1,000 logical qubits within a 10,000-atom array.

- Instruction Sets: The use of efficient logical gates minimizes the depth of the quantum circuit.

- Error Resilience: The design maintains low block error rates comparable to traditional, less efficient surface codes.
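The figures in this list can be cross-checked with simple arithmetic. The sketch below treats the encoding rate as logical qubits divided by the physical qubits inside the code blocks and ignores ancilla, routing, and workspace qubits, which is a simplifying assumption. The function names are illustrative.

```python
def logical_capacity(physical_qubits: int, rate_percent: int) -> int:
    """Logical qubits that fit if every physical qubit sits in a code block."""
    return physical_qubits * rate_percent // 100


def physical_needed(logical_qubits: int, rate_percent: int) -> int:
    """Physical qubits needed for a target logical count (rounded up)."""
    return -(-logical_qubits * 100 // rate_percent)


if __name__ == "__main__":
    for label, rate in (("qLDPC, about 30%", 30), ("small surface code, about 4%", 4)):
        print(label)
        print("  logical qubits in 10,000 atoms:", logical_capacity(10_000, rate))
        print("  atoms for 1,000 logical qubits:", physical_needed(1_000, rate))
```

At a 30% rate, 10,000 atoms could hold up to 3,000 logical qubits if every atom were part of a code block, which leaves headroom above the 1,000 logical qubits cited once other overheads are accounted for. At a 4% rate the same array would hold only 400.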

How long until quantum computers threaten global cybersecurity?

Quantum computers could threaten global cybersecurity within a few years to a decade, as new architectures are projected to break RSA-2048 with as few as 10,000 to 100,000 qubits. Current estimates suggest that a system with 26,000 qubits could solve the P-256 elliptic curve discrete logarithm problem in just a few days. While RSA-2048 factoring would take longer, the rapid scaling of neutral-atom processors suggests these milestones are approaching faster than anticipated.

The runtime for these cryptographic challenges depends heavily on the degree of parallelism within the quantum hardware. In their analysis, Picard, Endres, and Bluvstein explain that while 10,000 qubits is the baseline for possibility, increasing the qubit count to roughly 26,000 would allow more operations to run in parallel and shorten the runtime considerably. For instance, the discrete logarithm problem underlying elliptic curve cryptography, which secures much of the modern web, could be solved in a timeframe measured in days rather than years.

Analyzing the Timeline to a Functional Quantum Threat

A major distinction must be made between theoretical laboratory milestones and the deployment of a functional, cryptographically relevant quantum computer. While the research highlights that 10,000 qubits is theoretically sufficient, reaching this goal requires overcoming substantial engineering hurdles. The neutral-atom approach must still prove it can maintain high fidelity and coherence as arrays scale from the current 6,000-qubit experimental setups to the 10,000-plus qubits required for Shor's algorithm.

Despite these challenges, the pace of development is accelerating. The study notes that recent experiments have already achieved universal fault-tolerant operations below the critical error-correction threshold. If the current trajectory of quantum hardware development continues, the "Doomsday Clock" for modern encryption may indeed be ticking faster than the cybersecurity industry is currently prepared for, making the search for quantum-resistant solutions more urgent than ever.

Preparing for the Post-Quantum Cryptography Era

The realization that 10,000 qubits could dismantle contemporary security protocols has heightened the urgency for post-quantum cryptography (PQC). Government agencies and standards bodies are already publishing algorithms designed to withstand quantum attacks; NIST finalized its first three post-quantum standards (FIPS 203, 204, and 205) in August 2024. These standards rely on mathematical problems, such as those underlying lattice-based cryptography, that are believed to resist the speedup provided by Shor's algorithm.

For businesses and government entities, the transition to quantum-resistant architecture is no longer a distant concern but a present-day priority. Data that is encrypted today and stored by malicious actors could be decrypted in the near future once a 10,000-qubit neutral-atom processor becomes operational. This "harvest now, decrypt later" strategy makes the findings by Picard, Endres, and Bluvstein a call to action for the immediate adoption of cryptographic agility and modern security standards.

The Future of Fault-Tolerant Quantum Computing

Looking ahead, the implications of this research extend far beyond the narrow scope of breaking encryption. The ability to perform complex, fault-tolerant quantum computing tasks with relatively small hardware footprints opens the door to a wide range of scientific applications. From drug discovery to materials science, the neutral-atom architecture described in this study could democratize access to high-performance quantum resources by lowering the entry barrier for physical hardware requirements.

Future research will likely focus on refining the qLDPC codes and improving the physical fidelities of the atomic traps. As Manuel Endres and his team have shown, the path to a practical quantum advantage is not just about building bigger machines, but about building smarter ones. By optimizing the intersection of quantum error correction, circuit design, and atomic physics, the scientific community is rapidly closing the gap between quantum theory and cryptographic reality.
