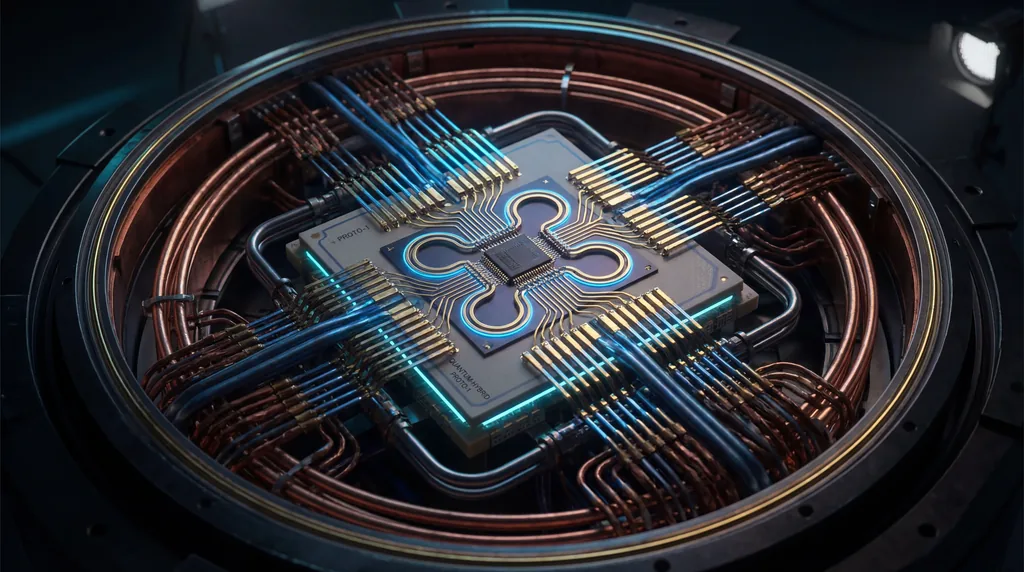

The connection between Large Hadron Collider (LHC) data and quantum computing rests on the inherently quantum nature of high-energy particle interactions: collisions produce states that carry entanglement and "magic" (non-stabilizerness), two properties that quantum information theory treats as fundamental resources for computation. By treating the LHC as a massive quantum simulator and mapping subatomic vacuum amplitudes onto qubits, scientists aim to bridge the gap between high-energy physics and information science. This synergy allows researchers to use the extreme energies of CERN experiments to study questions in quantum information theory that were previously considered purely theoretical.

Richard Feynman famously proposed in 1981 that to accurately simulate the complexities of nature, one must use the principles of quantum mechanics. For decades, the Large Hadron Collider has been viewed primarily as a tool for discovering new particles, such as the Higgs boson, through classical data analysis methods. However, a new research paradigm, spearheaded by German Rodrigo and colleagues, argues that high-energy colliders are genuine quantum machines. This shift moves the field away from treating collider data as classical signals and toward treating the underlying quantum amplitudes as a substrate for quantum computing.

Recent studies argue that the Large Hadron Collider can be viewed as a sophisticated quantum simulator, and that collider physics, in line with Feynman's foundational vision, is a prime candidate for testing quantum algorithms. The prospective applications include Quantum Machine Learning for data analysis, accelerated evaluation of multiloop Feynman diagrams, and enhanced simulations of "parton showers," the cascades of quarks and gluons radiated in high-energy collisions. Translating these physical processes into a digital quantum format would mark a significant milestone in high-energy physics.

Why use quantum machine learning for collider data analysis?

Quantum Machine Learning (QML) is being explored for collider data analysis because the High-Luminosity LHC will generate massive, multidimensional datasets that strain classical systems. On near-term devices, QML typically takes a hybrid quantum-classical form and targets computationally expensive tasks such as event reconstruction and jet clustering. The hope is that these algorithms can exploit quantum effects in pattern recognition to streamline data workflows and improve the precision of particle identification, although a practical advantage over classical methods has yet to be demonstrated.

Massive data volumes at CERN present a significant challenge for classical computing architectures, particularly as particle "pile-up" increases. In current experimental setups, the task of track reconstruction scales quadratically with the number of particles, leading to a computational bottleneck. Quantum Machine Learning algorithms aim to tame this complexity by encoding many candidate reconstruction paths in a quantum superposition and using interference to favor the promising ones, with the goal of letting physicists maintain high levels of accuracy as the LHC increases its luminosity.
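To make the quadratic scaling concrete, here is a minimal sketch. It assumes a deliberately naive seeding step in which every pair of detector hits is a candidate track segment; the function name `candidate_pairs` is hypothetical, and real tracking software prunes most pairs with geometric cuts.

```python
def candidate_pairs(n_hits):
    """Number of unordered hit pairs a naive seeding step would consider.

    Assumption: every pair of hits is a candidate (no geometric pruning).
    """
    return n_hits * (n_hits - 1) // 2


# Doubling the number of hits roughly quadruples the number of candidates.
for n_hits in (1_000, 2_000, 4_000):
    print(n_hits, candidate_pairs(n_hits))
```

Under this toy model, going from 1,000 to 2,000 hits raises the candidate count from about half a million to about two million, which is why higher pile-up is so costly.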

Jet clustering and particle identification are also candidates for improvement through quantum optimization. In a typical collision, quarks and gluons produce collimated sprays of particles known as jets; identifying the origin of these jets is critical for discovering new physics. Quantum algorithms have been proposed that could partition these complex sprays of energy more efficiently than classical clustering techniques. If realized, this would allow a more granular analysis of rare subatomic events that might otherwise be lost in the noise of standard data processing.

How do quantum computers accelerate Feynman diagram calculations?

Quantum computers could accelerate Feynman diagram calculations in two ways. Quantum Monte Carlo Integration, which is built on amplitude estimation, offers a quadratic speedup over classical sampling in the number of evaluations needed to reach a given precision. Separately, the Loop-Tree Duality recasts multiloop vacuum amplitudes in terms of causal structures that can be mapped onto quantum circuits and searched with quantum algorithms. Together, these approaches could allow researchers to evaluate high-order perturbative processes that are currently computationally prohibitive for classical supercomputers.
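As a rough illustration of what a quadratic speedup means for integration, compare how the statistical error shrinks with the number of integrand evaluations. The symbols are assumptions introduced here: $\sigma$ is the standard deviation of the integrand, $N$ the number of classical samples, and $M$ the number of quantum queries. Constants, logarithmic factors, state-preparation cost, and hardware noise are all ignored.

```latex
\epsilon_{\text{classical}} \sim \frac{\sigma}{\sqrt{N}}
\;\Rightarrow\; N \sim \frac{\sigma^{2}}{\epsilon^{2}},
\qquad
\epsilon_{\text{quantum}} \sim \frac{\sigma}{M}
\;\Rightarrow\; M \sim \frac{\sigma}{\epsilon}
```

For a target precision of $\epsilon = 10^{-3}\,\sigma$, this idealized scaling gives roughly $N \sim 10^{6}$ classical samples against $M \sim 10^{3}$ quantum queries.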

Multiloop Feynman diagrams represent the mathematical backbone of perturbative physics, yet their complexity grows exponentially with every additional loop. German Rodrigo highlights that identifying the causal structures within these diagrams is a fundamental component of the Loop-Tree Duality, which exhibits deep connections to graph theory. On a quantum computer, the internal lines of a diagram can be encoded in qubits, so that a quantum search can pick out the "causal" configurations, those that are physically allowed, with fewer queries than a classical scan over every possibility.
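The graph-theory connection can be sketched classically. The example below assumes that a "causal" configuration corresponds to an assignment of directions to a diagram's internal lines that leaves the graph free of directed cycles, and it uses a toy one-loop triangle rather than a real multiloop topology. The function names are hypothetical, and the brute-force loop stands in for the search that a quantum algorithm would perform over the same space, with one bit per line playing the role of one qubit.

```python
from itertools import product


def is_acyclic(num_vertices, directed_edges):
    """Kahn's algorithm: True if the directed graph contains no cycle."""
    indegree = [0] * num_vertices
    adjacency = [[] for _ in range(num_vertices)]
    for src, dst in directed_edges:
        adjacency[src].append(dst)
        indegree[dst] += 1
    ready = [v for v in range(num_vertices) if indegree[v] == 0]
    visited = 0
    while ready:
        v = ready.pop()
        visited += 1
        for w in adjacency[v]:
            indegree[w] -= 1
            if indegree[w] == 0:
                ready.append(w)
    # Every vertex is visited only if no cycle blocked the ordering.
    return visited == num_vertices


def causal_orientations(num_vertices, edges):
    """Return every bit string of edge directions that yields an acyclic graph.

    Bit 0 keeps an edge as (a, b); bit 1 flips it to (b, a).
    """
    causal = []
    for bits in product((0, 1), repeat=len(edges)):
        directed = [(b, a) if flip else (a, b)
                    for (a, b), flip in zip(edges, bits)]
        if is_acyclic(num_vertices, directed):
            causal.append(bits)
    return causal


# Toy topology (an assumption for illustration): a one-loop triangle.
triangle = [(0, 1), (1, 2), (2, 0)]
solutions = causal_orientations(3, triangle)
print(len(solutions), "of", 2 ** len(triangle), "orientations are acyclic")
```

The classical scan visits all 2^n orientations for n internal lines. A Grover-style search over the same space would, in the ideal case, need a number of oracle calls on the order of the square root of the ratio between all orientations and the acyclic ones.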

Vacuum amplitudes, which are Feynman diagrams with no external particles, serve in this approach as the starting point for calculating the cross-sections of particle interactions. The research indicates that mapping these amplitudes to qubits allows for the direct simulation of the underlying quantum field theory. This methodology aims to sidestep the lengthy algebraic expansions required by classical calculations, effectively using the quantum hardware to "mimic" the behavior of the particles themselves. It is a direct expression of the quantum simulation goals first proposed by Richard Feynman.

High-Dimensional Integration and Sampling

High-dimensional function integration remains one of the most significant computational hurdles in modern particle physics. To predict what happens at the Large Hadron Collider, theorists must integrate over hundreds of variables representing the momentum and spin of every particle produced in a collision. Quantum algorithms offer a path forward by providing more precise sampling of these high-dimensional spaces. This is a critical step toward realizing a fully fledged "quantum event generator," a software suite capable of simulating LHC collisions at high perturbative orders with unprecedented accuracy.
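For context, this is the kind of classical baseline that quantum sampling methods aim to improve on. The sketch is a plain Monte Carlo integrator over a unit hypercube, applied to a toy 10-dimensional integrand whose exact integral is 1/3. The integrand and all names are assumptions chosen for illustration and bear no relation to a real matrix element.

```python
import math
import random


def mc_integrate(f, dim, n_samples, rng):
    """Plain Monte Carlo estimate of the integral of f over [0, 1]^dim.

    Returns (estimate, standard_error).
    """
    total = 0.0
    total_sq = 0.0
    for _ in range(n_samples):
        value = f([rng.random() for _ in range(dim)])
        total += value
        total_sq += value * value
    mean = total / n_samples
    variance = max(total_sq / n_samples - mean * mean, 0.0)
    return mean, math.sqrt(variance / n_samples)


def toy_integrand(x):
    """Average of x_i^2; its exact integral over the unit hypercube is 1/3."""
    return sum(xi * xi for xi in x) / len(x)


rng = random.Random(0)  # fixed seed so the run is reproducible
for n in (100, 10_000):
    estimate, error = mc_integrate(toy_integrand, dim=10, n_samples=n, rng=rng)
    print(f"N={n:>6}  estimate={estimate:.4f}  standard error={error:.4f}")
```

Increasing the sample count by a factor of 100 shrinks the standard error by only about a factor of 10, the 1/sqrt(N) behavior that makes high-precision classical sampling expensive.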

Quantum event generators could eventually complement, or even replace, the classical Monte Carlo simulations currently used by experimentalists at CERN. While classical generators are reliable, they struggle with the precision required to detect subtle deviations from the Standard Model. A quantum-based generator would, in principle, account for quantum interference and entanglement natively, providing a more faithful representation of the subatomic world. This shift is expected to enhance the sensitivity of experiments searching for dark matter and other elusive phenomena beyond our current understanding.

The Future of Particle Physics and Quantum Integration

Future implications for the field suggest a deepening synergy between CERN's experimental hardware and emerging quantum software. The long-term roadmap for quantum-enhanced collider experiments involves integrating quantum processors directly into the data acquisition pipelines. This would allow for "real-time" quantum analysis of collisions, potentially identifying groundbreaking physics the moment it occurs. As Quantum Computing hardware matures, the boundary between the particle accelerator and the quantum computer will continue to blur.

- Standard Model Verification: Quantum simulations will provide the precision needed to test the limits of current physical laws.

- Beyond the Standard Model: Enhanced data analysis may reveal evidence of supersymmetry or extra dimensions.

- Algorithmic Efficiency: New quantum algorithms for physics will have spillover effects in fields like chemistry and materials science.

- Infrastructure Synergy: CERN is increasingly becoming a hub for quantum information science as well as high-energy physics.

Expertise in quantum simulations is no longer just a theoretical pursuit; it is becoming a requirement for the next generation of physicists. The work of researchers like German Rodrigo demonstrates that the infrastructure of the Large Hadron Collider is uniquely suited to the quantum era. By treating every collision as a computational event, the scientific community is finally unlocking the full potential of Richard Feynman’s 1981 vision, ensuring that the study of the smallest particles in the universe continues to drive the most advanced technological leaps in computing.
