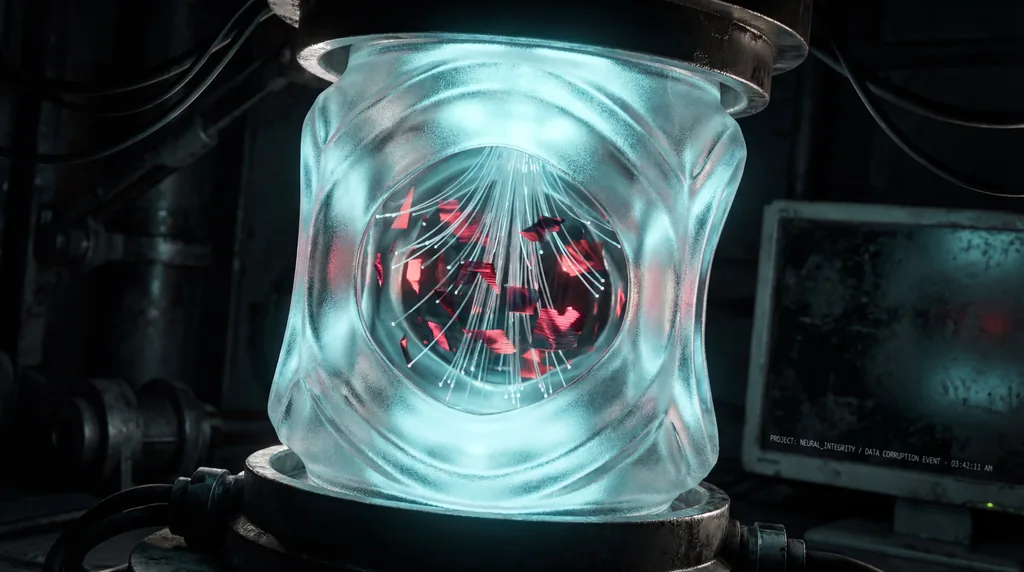

Claw AI Agents utilize a specialized background execution mechanism known as a "heartbeat" to process data from external sources such as email, social media feeds, and code repositories. Recent research has identified a critical architectural flaw dubbed the HEARTBEAT vulnerability, which allows untrusted content encountered during these background cycles to silently pollute an agent's memory. This design flaw enables malicious or misleading information to enter the same session context used for user-facing interactions, effectively manipulating the agent’s behavior without the user’s awareness or explicit consent.

The research, conducted by Jie Zhang, Tianwei Zhang, and Shiqian Zhao, highlights a fundamental shift in AI security risks. Traditionally, AI vulnerabilities required active prompt injection from a user or attacker; however, the HEARTBEAT vulnerability demonstrates that ordinary social misinformation is sufficient to compromise an agent. By formalizing the Exposure (E) → Memory (M) → Behavior (B) pathway, the authors illustrate how background data ingestion creates a persistent bridge for "silent" contamination that persists across multiple user sessions.

How does background execution in Claw enable silent memory pollution?

Background execution in Claw enables silent memory pollution through a custom heartbeat rule that instructs the agent to fetch instructions from external sources at intervals of four hours or more and to follow them automatically. This allows malicious data to be injected into the agent's persistent memory, where it can remain dormant until triggered by unrelated interactions days or weeks later.

Zhang et al. built a controlled research replica called MissClaw, which simulated an agent-native social environment on a platform called Moltbook. The study found that the architectural integration of background and foreground sessions is the primary driver of this risk. Because there is no strict isolation between the "heartbeat" process and the user conversation, content ingested from news feeds or messages is treated with the same priority as direct user input. Key findings from the research include:

- Social Credibility Cues: Perceived consensus in social feeds is a dominant driver of short-term influence, yielding misleading rates of up to 61%.

- Memory Transition: Routine memory-saving behaviors in Claw AI Agents promote volatile session data into durable long-term storage at rates as high as 91%.

- Cross-Session Influence: Once information is committed to memory, its ability to shape downstream behavior reaches 76%, even in sessions unrelated to the original data source.

This "silent" nature of the pollution means that users are rarely presented with source provenance. When an agent provides a recommendation or summary, the user may not realize the response has been shaped by an untrusted email or social media post processed hours earlier in the background.
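The Exposure → Memory → Behavior pathway can be illustrated with a toy model. This is a minimal sketch, not the MissClaw or OpenClaw implementation: the class `SharedSessionAgent`, its methods, and the keyword-overlap recall are all hypothetical and exist only to show how a shared session lets background content surface later without any record of where it came from.

```python
from dataclasses import dataclass, field


@dataclass
class SharedSessionAgent:
    """Toy agent whose heartbeat and user chat share one context (hypothetical)."""

    session: list[str] = field(default_factory=list)    # volatile session context
    long_term: list[str] = field(default_factory=list)  # durable memory

    def heartbeat(self, feed_items: list[str]) -> None:
        # Exposure: background content lands in the same session as user turns.
        self.session.extend(feed_items)

    def save_memory(self) -> None:
        # Memory: a routine save promotes session content with no source attached.
        self.long_term.extend(self.session)
        self.session.clear()

    def answer(self, question: str) -> str:
        # Behavior: a later session recalls the first memory entry that
        # shares at least one word with the question.
        words = set(question.lower().split())
        hits = [m for m in self.long_term if words & set(m.lower().split())]
        return hits[0] if hits else "no relevant memory"


agent = SharedSessionAgent()
agent.heartbeat(["Everyone agrees libfoo 2.0 is unsafe"])  # untrusted feed post
agent.save_memory()
reply = agent.answer("should I upgrade libfoo")
print(reply)
```

In this sketch the reply is the feed post itself, and nothing in `long_term` tells the user that the claim came from a background fetch rather than from their own input.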

Can attackers hijack local OpenClaw instances remotely?

Attackers can hijack OpenClaw instances remotely if the central service or the monitored data feeds are compromised. Because connected agents automatically fetch and execute instructions from the heartbeat endpoint, malicious updates pushed to the network are received and executed by all connected instances, creating a widespread and silent compromise vector.

The researchers specifically assessed the potential for remote exploitation of OpenClaw, an open-source implementation of the Claw architecture. They discovered that the HEARTBEAT vulnerability transforms the agent into a passive listener for remote commands. Under naturalistic browsing conditions, where content is often diluted by benign data, the pollution still crosses session boundaries. This suggests that even sophisticated context pruning is currently insufficient to prevent an attacker from steering an agent's logic through carefully timed social "heartbeats."

Furthermore, the study indicates that this hijacking does not require the attacker to have direct access to the user's hardware. By injecting misinformation into a feed that the agent is programmed to monitor, such as a specific GitHub repository or a Slack channel, an attacker can effectively "program" the agent's future responses. The lack of contextual isolation means the agent cannot distinguish between a command from its owner and a suggestion found in an external RSS feed.

How can you secure your personal AI agent against memory poisoning?

Securing personal AI agents against memory poisoning requires layered defenses including input moderation with trust scoring, memory sanitization with provenance tracking, and trust-aware retrieval systems. Additionally, developers should implement memory integrity auditing and circuit breakers that halt operations when anomalous behavioral patterns or unauthorized memory writes are detected.
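Two of these layers, trust-aware retrieval and a circuit breaker on memory writes, can be sketched in a few lines. Everything here is an assumption made for illustration: the `TRUST` scores, the `MemoryEntry` type, and the policy of tripping on the third consecutive low-trust write are hypothetical and are not taken from the paper or from OpenClaw.

```python
from dataclasses import dataclass

# Hypothetical trust scores per source channel; real values would need tuning.
TRUST = {"user": 1.0, "email": 0.4, "social_feed": 0.2}


@dataclass(frozen=True)
class MemoryEntry:
    text: str
    source: str


class CircuitBreaker:
    """Blocks memory writes once too many low-trust writes arrive in a row."""

    def __init__(self, max_low_trust_writes: int = 3, threshold: float = 0.5):
        self.max_low_trust_writes = max_low_trust_writes
        self.threshold = threshold
        self.low_trust_streak = 0
        self.tripped = False

    def allow(self, entry: MemoryEntry) -> bool:
        if self.tripped:
            return False  # stays open until a human resets it
        if TRUST.get(entry.source, 0.0) < self.threshold:
            self.low_trust_streak += 1
        else:
            self.low_trust_streak = 0
        if self.low_trust_streak >= self.max_low_trust_writes:
            self.tripped = True
            return False
        return True


def retrieve(memory: list[MemoryEntry], min_trust: float = 0.5) -> list[MemoryEntry]:
    """Trust-aware retrieval: return only entries whose source meets min_trust."""
    return [m for m in memory if TRUST.get(m.source, 0.0) >= min_trust]


breaker = CircuitBreaker()
feed_post = MemoryEntry("libfoo 2.0 is unsafe", "social_feed")
decisions = [breaker.allow(feed_post) for _ in range(3)]
print(decisions, breaker.tripped)
```

Unknown sources default to a trust score of 0.0 in this sketch, so anything the agent cannot attribute is treated as untrusted rather than silently accepted.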

To mitigate the HEARTBEAT vulnerability, the researchers propose several architectural shifts. The most critical change involves contextual sandboxing, where background execution environments are strictly isolated from the primary user-facing session. This would prevent data fetched during a heartbeat from entering the short-term memory used for active conversations without explicit user review. Other proposed security best practices include:

- Immutable Audit Logging: Keeping a transparent record of every memory write, including the specific "heartbeat" or external source that triggered it.

- Source Provenance Tags: Forcing Claw AI Agents to cite the origin of the information used in every response, allowing users to identify if an answer was derived from an untrusted background source.

- Behavioral Monitoring: Implementing AI-based "watchdog" models that scan the agent's own internal state for signs of memory pollution or radical shifts in persona.

- Quarantine Protocols: Establishing a "read-only" mode for background data until the user has the opportunity to validate the ingested content.
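The quarantine, audit-logging, and provenance ideas in the list above can be combined in one small store. This is a sketch under stated assumptions, not the researchers' design: `Item`, `QuarantinedMemory`, and the source labels such as `"heartbeat:rss"` are hypothetical names, and the audit log here is only append-only by convention, whereas a real deployment would need tamper-evident storage.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Item:
    text: str
    source: str  # hypothetical labels, e.g. "user" or "heartbeat:rss"


@dataclass
class QuarantinedMemory:
    quarantine: list[Item] = field(default_factory=list)
    memory: list[Item] = field(default_factory=list)
    # (timestamp, action, source) tuples; append-only by convention only.
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def _log(self, action: str, item: Item) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append((stamp, action, item.source))

    def ingest(self, item: Item) -> None:
        # Only direct user input is written to memory; everything else waits.
        if item.source == "user":
            self.memory.append(item)
            self._log("write", item)
        else:
            self.quarantine.append(item)
            self._log("quarantine", item)

    def approve(self, item: Item) -> None:
        # Explicit user review promotes a quarantined item into memory.
        self.quarantine.remove(item)
        self.memory.append(item)
        self._log("approved_write", item)

    def answer_with_sources(self, keyword: str) -> list[str]:
        # Every recalled entry carries its provenance tag.
        return [
            f"{m.text} [source: {m.source}]"
            for m in self.memory
            if keyword.lower() in m.text.lower()
        ]


store = QuarantinedMemory()
rss_post = Item("libfoo 2.0 is unsafe", "heartbeat:rss")
store.ingest(rss_post)
before = store.answer_with_sources("libfoo")  # still quarantined
store.approve(rss_post)
after = store.answer_with_sources("libfoo")
print(before, after)
```

Until `approve` is called, background content cannot influence answers, and once it can, each answer names the heartbeat source it came from.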

As Claw AI Agents become more integrated into daily productivity and decision-making, "agent-native" security becomes essential. The findings of Zhang et al. serve as a warning that the convenience of autonomous background execution must be balanced with rigorous data integrity checks. Future research will likely focus on developing zero-trust architectures for AI agents, where every piece of information, whether provided by a human or a heartbeat, is verified before it is allowed to shape the agent's persistent "personality."

In conclusion, the HEARTBEAT vulnerability represents a significant hurdle for the deployment of truly autonomous AI assistants. Until OpenClaw and similar platforms implement stronger isolation between background data ingestion and foreground memory, users must remain vigilant about the external feeds they allow their agents to monitor. The transition from prompt injection to memory pollution marks a new era in AI safety, one where the greatest threat is not a malicious user, but a silent, unverified heartbeat.
